Anthropic Claude Mythos: The Dawn of the Autonomous Cybersecurity Era & Risks To SMEs

Anthropic’s Claude Mythos AI has triggered alarm across industries. Is modern digital architecture ready for the disruption?

Opinions expressed by Entrepreneur contributors are their own.

You're reading Entrepreneur India, an international franchise of Entrepreneur Media.

Anthropic is on a roll, releasing a string of ‘category killers’. From digging the grave for conventional IT firms (read SaaSocalypse) to dominating content creation, its purview keeps expanding week after week. Yet, nothing has made the industry squirm quite like Claude Mythos.

What is Claude Mythos?

Last month, Anthropic unveiled Claude Mythos Preview, a general-purpose language model focused on cybersecurity tasks.

Anthropic researchers disclosed that Mythos Preview was capable of identifying and then exploiting zero-day vulnerabilities in every major operating system and major web browser if directed by a user to do so.

The model can identify vulnerabilities that are typically difficult to detect. In one case, Mythos discovered a now-patched 27-year-old bug in OpenBSD, an operating system known for its security features. It can also find vulnerabilities classified as ‘zero-day’: security flaws that are unknown to the vendor or developers and require immediate action to fix.

“Non-experts can also leverage Mythos Preview to find and exploit sophisticated vulnerabilities. Engineers at Anthropic with no formal security training have asked Mythos Preview to find remote code execution vulnerabilities overnight, and woken up the following morning to a complete, working exploit. In other cases, we’ve had researchers develop scaffolds that allow Mythos Preview to turn vulnerabilities into exploits without any human intervention,” researchers noted.

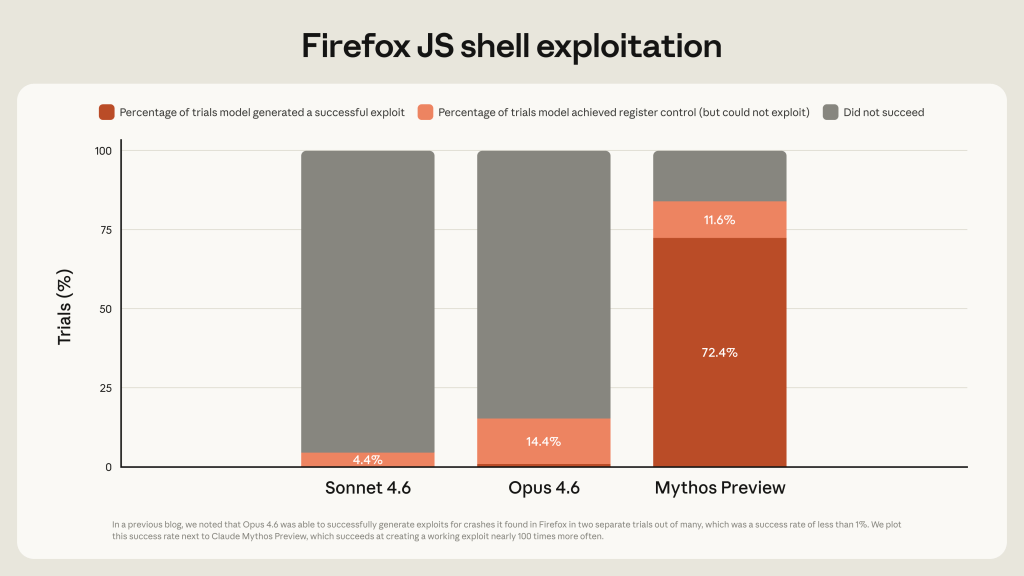

“Last month, we wrote that “Opus 4.6 is currently far better at identifying and fixing vulnerabilities than at exploiting them.” Our internal evaluations showed that Opus 4.6 generally had a near-0% success rate at autonomous exploit development. But Mythos Preview is in a different league,” they noted.

It is important to note that Mythos did not come out of the blue. The company had long been cautioning about the growing cyber capabilities of AI.

Its previous models, such as Claude Opus, which is widely available, were capable enough to detect more than 500 vulnerabilities classified as “high-severity” in open-source software.

Source: Anthropic

In that sense, Mythos is a Hulked-up version of what Anthropic had already accomplished, which is why researchers put the model in a “different league”. Moreover, it is highly plausible that bad actors can leverage previous models to conduct attacks.

Clearly, Mythos’ prowess is unprecedented. It appears that nothing would be off-limits if the model were widely deployed. Alarming enough?

For your own good

Like a good parent who would not allow their underage children to drive, Anthropic chose not to make a wide release of Mythos, and in its own words: “Mythos Preview has already found thousands of high-severity vulnerabilities, including some in every major operating system and web browser. Given the rate of AI progress, it will not be long before such capabilities proliferate, potentially beyond actors who are committed to deploying them safely. The fallout—for economies, public safety, and national security—could be severe.”

To contain its own creation, Anthropic has come up with Project Glasswing: an initiative that brings together Amazon Web Services, Anthropic, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks.

Under Project Glasswing, Anthropic says it will share its learnings and has extended access to a group of more than “40 additional organizations that build or maintain critical software infrastructure so they can use the model to scan and secure both first-party and open-source systems.”

Clearly, Anthropic is cognizant of the model's potential risks. But is this going to be enough? Perhaps the right question is: how long before we see the first misuse of it? Critics also say that restricting access to a select group is a sign that these companies are becoming new-age gatekeepers.

Isn’t that concerning!

The gravity of the Claude Mythos disclosure can be gauged from the reactions it has drawn globally.

In the US, the Trump administration is reportedly considering new oversight for future models before they are made publicly available. Canada's Finance Minister François-Philippe Champagne told the BBC that the AI model was brought up for discussion at an International Monetary Fund (IMF) meeting in Washington.

The European Union has also acknowledged the development. According to a report, the EU is seeking access to the model, which is currently limited to select American companies. For now, however, it is relying on regulation for preparedness.

“Once the enforcement powers of the AI Office start in August 2026, we will ensure to receive, if needed, model access,” an EU spokesperson told Politico.

Back in India, Finance Minister Nirmala Sitharaman held meetings with several top bankers and financial sector executives to discuss the risks from AI models like Claude Mythos.

The conversation, according to reports, revolved around cybersecurity preparedness for banks and critical financial infrastructure against new-age threats. Reports add that the Reserve Bank of India and the Indian Computer Emergency Response Team (CERT-In) also participated in the meeting.

According to an IBM report, the global average cost of a data breach is USD 4.4 million, down 9% from the previous year as systems become better at identification and containment. Of the organizations that reported an AI-related security incident, 97% lacked proper AI access controls. Moreover, 63% of organizations did not have AI governance policies to manage AI or contain the proliferation of shadow AI.

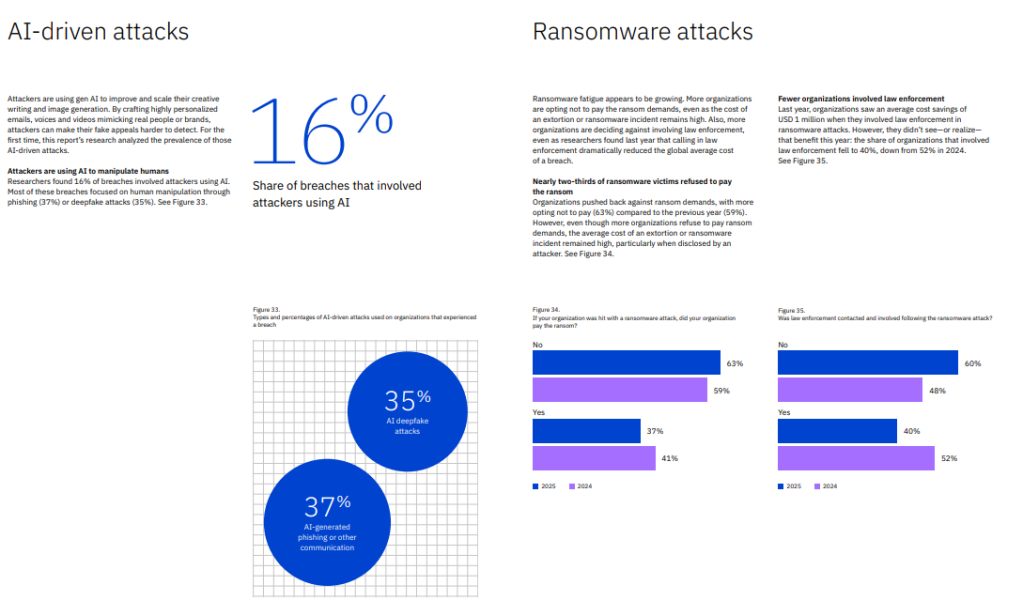

“Attackers are using gen AI to improve and scale their creative writing and image generation. By crafting highly personalized emails, voices and videos mimicking real people or brands, attackers can make their fake appeals harder to detect. For the first time, this report’s research analyzed the prevalence of those AI-driven attacks,” the report noted.

Source: IBM

For India, the IBM report says the average cost has gone up to USD 2.51 million in 2025 from USD 2.35 million in the previous year.

Rewriting rulebooks

The global cybersecurity landscape was already evolving at a brisk pace, and a number of security companies have begun embracing AI. But the kind of exponential upgrade Mythos brings is set to disrupt the entire landscape, prompting enterprises to look at security through a whole new lens.

“The real shift brought by Mythos is not simply the emergence of a “new type of attack.” It is the dramatic compression of the time window between discovery and exploitation. Work that once required human experts to inspect systems one by one can now move toward machine-scale scanning and testing,” Alex Chenglin Wu, founder and CEO of DeepWisdom, launched by OpenManus, a VC-backed AI coding startup, told Entrepreneur.

“If both attackers and defenders have access to these capabilities but defenders still rely on human approval chains, manual testing, and slow remediation processes, attackers will have a natural time advantage. This means defenders also need to learn how to use AI’s capabilities effectively, which helps detect vulnerabilities faster and prioritize, contain, and remediate them at machine speed.”

Apeksha Kaushik, Sr Principal Analyst at Gartner, says Claude Mythos marks a tipping point where AI can weaponize vulnerabilities faster than organizations can react. The security metrics in use today do not work in a world where bad actors can use Anthropic’s Claude Mythos to find vulnerabilities in minutes.

“It’s not a new threat, but Mythos introduces a new level of speed to the weaponizing technology that can autonomously find vulnerabilities. The speed and capability of Mythos to find and exploit unknown issues make it vital that CIOs stop measuring vulnerability patching and start measuring how fast the vulnerability can be found and how quickly it can be isolated or mitigated. It is a structural shift in cyber-risk dynamics,” she said.

Kaushik added: “… With the introduction of Mythos, enterprises face a severe remediation backlog that outpaces their internal engineering capacity to absorb updates driven by Common Vulnerabilities and Exposures (CVE). Security product leaders cannot rely on established reactive approaches and must proactively pivot toward autonomous cyber immune systems.”
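The metrics Kaushik describes, how fast a vulnerability is found and how quickly it is isolated or mitigated, can be computed from basic incident timestamps. The following is a minimal sketch, not a Gartner-defined method: the `VulnRecord` fields, the function names, and the sample dates are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean


@dataclass
class VulnRecord:
    """One vulnerability's timeline (hypothetical fields for illustration)."""
    exposed: datetime    # when the flaw became reachable in production
    detected: datetime   # when the security team found it
    mitigated: datetime  # when it was isolated or mitigated


def mean_hours_to_detect(records: list[VulnRecord]) -> float:
    """Average hours between exposure and detection."""
    seconds = [(r.detected - r.exposed).total_seconds() for r in records]
    return mean(seconds) / 3600


def mean_hours_to_mitigate(records: list[VulnRecord]) -> float:
    """Average hours between detection and isolation or mitigation."""
    seconds = [(r.mitigated - r.detected).total_seconds() for r in records]
    return mean(seconds) / 3600


# Made-up sample data, not taken from any report.
RECORDS = [
    VulnRecord(datetime(2025, 1, 1, 0), datetime(2025, 1, 1, 6), datetime(2025, 1, 1, 8)),
    VulnRecord(datetime(2025, 1, 2, 0), datetime(2025, 1, 2, 2), datetime(2025, 1, 2, 8)),
]

if __name__ == "__main__":
    print(f"Mean time to detect:   {mean_hours_to_detect(RECORDS):.1f} h")
    print(f"Mean time to mitigate: {mean_hours_to_mitigate(RECORDS):.1f} h")
```

Tracking the two numbers separately shows whether the bottleneck lies in finding issues or in containing them once they are known.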

Expert Systems

Between Anthropic’s Project Glasswing and OpenAI’s Trusted Access for Cyber (TAC), we are now seeing a remarkable change in which the focus is moving away from generalist LLMs and towards expert AI models.

Meetu Malhotra, data analytics principal at S&P Global, tells Entrepreneur that we are indeed entering a phase where enterprises are moving towards “expert” systems, meaning a strong model (system) built on top of a base model that works on domain-specific data and utilizes tools and guardrails.

Most organizations and research groups cannot train a large language model from scratch because it demands massive computational resources, huge datasets, and significant engineering effort and cost.

So instead of reinventing the wheel, they start from an existing base model that is already trained on broad, general data. On top of this general model, they then apply fine-tuning to adapt the model to their own domain and tasks (e.g., healthcare, finance, cyber, legal), using much smaller, targeted datasets.

“In this view, the base LLM is a generalized reasoning engine, and domain specific models are specialized overlays built on top of it. Another major way to specialize general models is RAG (Retrieval Augmented Generation), where you don’t change the model, but instead connect the model to your internal documents, code, or databases so that it “looks up” relevant information before answering. This both reduces hallucinations and helps control costs, because you get high quality answers grounded in your own data without needing full retraining. A further application is agents, the systems that use a general LLM as the “brain” and work along with tools, memory, and workflows so that agents can plan, call APIs, and act within a specific environment. Together, fine tuning, RAG, and agents illustrate that the future isn’t about replacing generalist LLMs, but about using them as a flexible core that powers many specialized, high value systems,” she said.
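The “look up before answering” idea behind RAG can be sketched in a few lines. This is a toy illustration under stated assumptions: production systems typically retrieve with vector embeddings, whereas this sketch swaps in simple keyword overlap, and it stops at building the prompt rather than calling any model. The policy documents and function names are invented for the example.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def overlap_score(query: str, document: str) -> int:
    """Count the words shared by query and document (a stand-in for embeddings)."""
    q, d = Counter(tokenize(query)), Counter(tokenize(document))
    return sum(min(q[word], d[word]) for word in q)


def retrieve(query: str, documents: list[str], k: int = 1) -> list[str]:
    """Return the k documents that share the most words with the query."""
    ranked = sorted(documents, key=lambda doc: overlap_score(query, doc), reverse=True)
    return ranked[:k]


def build_prompt(query: str, documents: list[str], k: int = 1) -> str:
    """Ground the question in retrieved context before it reaches the model."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return f"Answer using only the context below.\nContext:\n{context}\nQuestion: {query}"


# Hypothetical internal policy snippets, made up for this example.
DOCS = [
    "Password resets require approval from the IT help desk.",
    "Patches for internet-facing servers must be applied within 7 days.",
    "Visitors must sign in at the front desk.",
]

if __name__ == "__main__":
    print(build_prompt("How quickly must patches be applied to servers?", DOCS))
```

The model itself is unchanged; only the prompt is enriched with the organization's own data, which is why this approach avoids the cost of retraining.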

“According to a study, by around 2028, over half of enterprise GenAI models in production will be domain specific, trained or adapted for a sector. On top of that, we’re increasingly wrapping these domain models in industry specific guardrails. Guardrails are not just content filters; they are policies, checks, and controls that enforce safety, compliance, and data privacy rules for a particular sector,” she added.

Experts believe large language models, however powerful, are not sufficient on their own. Guardrails are the rules and policies that define how users and systems interact with an LLM. They sit between the model and the real-world workflow, making it safe enough to use in regulated and high-stakes environments.

“In healthcare, for instance, you do not want users casually pasting patient PII into a chat box. There must be input guardrails that warn or block users from sharing sensitive data, and ideally automatically redact any such PII info. Likewise, on the output side, guardrails are needed to check that the model’s answers are grounded, compliant, and not fabricated (aka hallucinated) before they reach the end user – in this case clinicians or patients. A perfect example of what happens when you don’t put proper guardrails in place is Chipotle’s support bot. Even Chipotle’s support bot was caught writing Python code to reverse a linked list instead of just taking burrito orders, showing how a domain product built on a general LLM can easily drift outside its intended use when guardrails are not in place. In practice, the useful system is not “just an LLM,” but an LLM plus these domain aware guardrails around data, prompts, and responses,” Malhotra added.
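The two guardrails Malhotra mentions, redacting sensitive input and keeping a bot within its intended use, can be illustrated with a rough sketch. The regular expressions, the keyword list, and the function names below are assumptions made for this example; real deployments generally rely on dedicated PII-detection and policy tooling rather than simple patterns like these.

```python
import re

# Deliberately simple patterns for illustration; they will miss many real-world formats.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s-]{8,}\d")


def redact_input(text: str) -> tuple[str, bool]:
    """Input guardrail: mask emails and phone numbers before text reaches the model.

    Returns the redacted text and a flag saying whether anything was masked.
    """
    redacted = EMAIL.sub("[EMAIL]", text)
    redacted = PHONE.sub("[PHONE]", redacted)
    return redacted, redacted != text


def in_scope(request: str, allowed_keywords: set[str]) -> bool:
    """Scope guardrail: accept a request only if it mentions an allowed topic."""
    words = set(re.findall(r"[a-z]+", request.lower()))
    return bool(words & allowed_keywords)


# Hypothetical topic list for a food-ordering bot.
ORDERING_TOPICS = {"order", "burrito", "bowl", "menu", "delivery"}

if __name__ == "__main__":
    print(redact_input("Patient jane.doe@example.com, phone +91 98765 43210, has a fever."))
    print(in_scope("Write Python code to reverse a linked list", ORDERING_TOPICS))
    print(in_scope("I want to order a burrito", ORDERING_TOPICS))
```

A keyword check is crude, but it shows the principle: the guardrail sits outside the model and decides what is allowed in and what the product is permitted to answer.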

Wu further explains that AI has moved beyond being a coding assistant that helps developers write code. It is becoming a new class of system capable of competing with some of the world’s top security researchers.

Cyber defense and cyber offense are two sides of the same coin. If Mythos can reason about vulnerabilities at the level of elite security researchers, it also implies the potential to generate offensive capabilities at a similar level. In the future, security researchers will not only be competing with human attackers; they will also have to defend against AI attackers that can search, test, and exploit weaknesses at machine speed.

What about SMEs?

Large enterprises with deep pockets, in sectors such as banking and IT, are probably in a better position to cope with a changing cybersecurity landscape in which AI-driven cyberattacks could be the new normal. But what about companies with a smaller scale and budget?

Citing data from the Indian SME Forum, CERT-In, and DSCI Industry Insights, a June 2025 report from Prime Infoserv states that nearly 74% of Indian SMEs have suffered at least one cyberattack in the last year. Nearly 60% of affected SMEs were unable to recover fully, in some cases leading to the business shutting down altogether. Just 13% of Indian SMEs have a formal cybersecurity policy.

Clearly, SMEs in India, and likely around the world, are at a disadvantage in this new normal. Industry experts have time and again called for the democratization of such security tools, especially for smaller enterprises.

Wu tells Entrepreneur, “In the short term, there is a real security gap: the most advanced AI defense tools are still built mainly for large enterprises and critical institutions. Over the medium- to long-term, however, AI security should become more accessible to SMEs.”

He also proposes that cloud providers embed AI defense into their core services, allowing smaller companies to benefit without having to buy standalone tools. Open models and lightweight AI agents will also help SMEs automate basic security operations at a lower cost. Regulation and industry standards will further push the market toward broader, more democratic access to baseline AI security, he added.

Tarun Wig, co-founder and CEO of Innefu Labs, adds that the cybersecurity gap is at real risk of widening over the next few years, as large enterprises and governments have substantial budgets to invest in advanced AI defense tools.

But, over the course of time, AI can be a great equalizer for small and medium enterprises. Cloud-based AI security solutions, automated threat monitoring, and managed detection services continue to make the world of advanced cybersecurity more accessible.

“The true promise is in making cybersecurity skills more accessible, enabling smaller organisations to discover vulnerabilities, track threats and react swiftly without the need for a big security workforce, with the assistance of AI copilots. The long-term winners will be SMEs who embrace AI and not be daunted by its complexity,” he told Entrepreneur India.

Summing it up

Anthropic’s new AI tool has set alarm bells ringing, though given the progress of AI in the last couple of years, and what Anthropic has been up to, it is hardly surprising.

For AI enthusiasts, the prowess is impressive, but the general consensus is that the global digital architecture is not ready for a tool that can automate such intricate cybersecurity tasks, namely autonomously identifying and exploiting zero-day vulnerabilities. Project Glasswing adds another layer of complexity in the current geopolitical situation.

While global regulators and large enterprises are scrambling to deploy equal or better defense mechanisms, it’s important to also note that smaller entrepreneurs are now at high risk as cyberattackers could look to exploit the widening tech divide.
